Jenkins-K8S Charm

jenkins-k8s github src

jenkins-k8s charmstore

For the most part, I was able to follow the pattern used by the other existing k8s charms.

I used filesystem storage for the Jenkins home directory (https://github.com/jamesbeedy/caas/blob/jenkins_k8s/charms/jenkins/src/metadata.yaml#L22,L24) and defined the TCP ports in the pod spec (https://github.com/jamesbeedy/caas/blob/jenkins_k8s/charms/jenkins/src/reactive/spec_template.yaml#L7,L9).
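For readers who don't follow the links, here is roughly what those two pieces look like. This is an illustrative sketch, not a copy of the linked revision: the storage name `jenkins-home` and the mount point `/var/jenkins_home` come from the deploy command and the pod description later in this post, the container name and port come from that same pod description, and the real spec template also carries the image details, which are omitted here. The YAML is wrapped in shell heredocs only so it can be printed or piped; the function names are hypothetical.

```shell
#!/usr/bin/env bash
# Illustrative only: NOT the linked files, just the same shape.

print_storage_stanza() {
  # metadata.yaml: a filesystem store mounted at the Jenkins home directory.
  # "jenkins-home" is the name used later with: --storage jenkins-home=1G,k8s-ebs
  cat <<'EOF'
storage:
  jenkins-home:
    type: filesystem
    location: /var/jenkins_home
EOF
}

print_podspec_ports() {
  # Pod spec fragment: the Jenkins web UI on TCP 8080.
  # The real template also needs the image details (omitted here).
  cat <<'EOF'
containers:
  - name: jenkins-k8s
    ports:
      - containerPort: 8080
        protocol: TCP
EOF
}
```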

The Docker image for Jenkins is built from the upstream jenkins image:

```dockerfile
FROM jenkins/jenkins:2.60.3

# Distributed builds plugins
RUN /usr/local/bin/install-plugins.sh ssh-slaves

# Notification and publishing plugins
RUN /usr/local/bin/install-plugins.sh email-ext mailer slack

# Artifacts
RUN /usr/local/bin/install-plugins.sh htmlpublisher

# UI
RUN /usr/local/bin/install-plugins.sh greenballs simple-theme-plugin

# Scaling
RUN /usr/local/bin/install-plugins.sh kubernetes

# Install Maven (needs root), then drop back to the jenkins user
USER root
RUN apt-get update \
    && apt-get install -y maven \
    && rm -rf /var/lib/apt/lists/*
USER jenkins
```

Build the image:

```shell
docker build . -t jamesbeedy/juju-jenkins-test:1.0
```

List the images to check that it's there:

```
$ docker image list
REPOSITORY                     TAG   IMAGE ID       CREATED          SIZE
jamesbeedy/juju-jenkins-test   1.0   0f711167f01c   19 seconds ago   893MB
```
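Before pushing, a local smoke test can show whether the image itself starts, independently of Kubernetes. A minimal sketch, assuming a local Docker daemon and the tag built above; the container and volume names `jenkins-smoke` and `jenkins-smoke-home` are hypothetical, and `/usr/share/jenkins/ref/plugins` is assumed to be where the upstream image's `install-plugins.sh` places plugins.

```shell
#!/usr/bin/env bash
# Image under test; override by exporting IMAGE before sourcing.
IMAGE="${IMAGE:-jamesbeedy/juju-jenkins-test:1.0}"

list_baked_plugins() {
  # Bypass the Jenkins entrypoint and just list the plugins baked into the
  # image's reference directory (assumed location, see lead-in).
  docker run --rm --entrypoint ls "$IMAGE" /usr/share/jenkins/ref/plugins
}

start_smoke_container() {
  # Start Jenkins detached, publishing the web UI port used in the pod spec.
  docker run -d --name jenkins-smoke -p 8080:8080 \
    -v jenkins-smoke-home:/var/jenkins_home "$IMAGE"
}

stop_smoke_container() {
  # Remove the test container (the named volume is kept).
  docker rm -f jenkins-smoke
}
```

Run `list_baked_plugins` to confirm the plugins from the Dockerfile are present, then `start_smoke_container` and `docker logs -f jenkins-smoke` to watch Jenkins boot. A Docker named volume is typically pre-populated from the image's own `/var/jenkins_home`, so this may not reproduce the conditions of a freshly provisioned cloud volume.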

Build the charm:

```shell
make build
```

Push it to the charm store:

```shell
charm push ./build/builds/jenkins-k8s jenkins-k8s --resource jenkins_image=jamesbeedy/juju-jenkins-test:1.0
```

Everything seems fine at this point, although I'm not sure what other components I may be missing. I looked at the upstream pod spec for Jenkins and it seemed pretty simple, basically defining only a port, so that's what I did here.

Jenkins-K8S Deployment Process

```shell
# Bootstrap AWS
juju bootstrap aws

# Deploy K8S + AWS integrator
juju deploy cs:bundle/canonical-kubernetes-363
juju deploy cs:~containers/aws-integrator-8

# Trust aws-integrator and relate to k8s worker/master
juju trust aws-integrator
juju relate aws-integrator kubernetes-master
juju relate aws-integrator kubernetes-worker
```

The deployment settles and our k8s cluster is live:

```
Model    Controller  Cloud/Region   Version  SLA          Timestamp
default  pdl-aws     aws/us-west-2  2.5.0    unsupported  13:52:38-08:00

App                    Version  Status  Scale  Charm                  Store       Rev  OS      Notes
aws-integrator         1.15.71  active      1  aws-integrator         jujucharms    8  ubuntu
easyrsa                3.0.1    active      1  easyrsa                jujucharms  195  ubuntu
etcd                   3.2.10   active      3  etcd                   jujucharms  338  ubuntu
flannel                0.10.0   active      5  flannel                jujucharms  351  ubuntu
kubeapi-load-balancer  1.14.0   active      1  kubeapi-load-balancer  jujucharms  525  ubuntu  exposed
kubernetes-master      1.13.2   active      2  kubernetes-master      jujucharms  542  ubuntu
kubernetes-worker      1.13.2   active      3  kubernetes-worker      jujucharms  398  ubuntu  exposed

Unit                      Workload  Agent  Machine  Public address  Ports           Message
aws-integrator/1*         active    idle   11       172.31.102.27                   ready
easyrsa/0*                active    idle   0        172.31.102.85                   Certificate Authority connected.
etcd/0*                   active    idle   1        172.31.102.95   2379/tcp        Healthy with 3 known peers
etcd/1                    active    idle   2        172.31.102.73   2379/tcp        Healthy with 3 known peers
etcd/2                    active    idle   3        172.31.102.151  2379/tcp        Healthy with 3 known peers
kubeapi-load-balancer/0*  active    idle   4        172.31.102.181  443/tcp         Loadbalancer ready.
kubernetes-master/0*      active    idle   5        172.31.102.4    6443/tcp        Kubernetes master running.
  flannel/0*              active    idle            172.31.102.4                    Flannel subnet 10.1.63.1/24
kubernetes-master/1       active    idle   6        172.31.102.144  6443/tcp        Kubernetes master running.
  flannel/1               active    idle            172.31.102.144                  Flannel subnet 10.1.2.1/24
kubernetes-worker/0*      active    idle   7        172.31.102.246  80/tcp,443/tcp  Kubernetes worker running.
  flannel/2               active    idle            172.31.102.246                  Flannel subnet 10.1.89.1/24
kubernetes-worker/1       active    idle   8        172.31.102.192  80/tcp,443/tcp  Kubernetes worker running.
  flannel/4               active    idle            172.31.102.192                  Flannel subnet 10.1.34.1/24
kubernetes-worker/2       active    idle   9        172.31.102.30   80/tcp,443/tcp  Kubernetes worker running.
  flannel/3               active    idle            172.31.102.30                   Flannel subnet 10.1.47.1/24

Machine  State    DNS             Inst id              Series  AZ          Message
0        started  172.31.102.85   i-08cc8c6a85f78d6dd  bionic  us-west-2a  running
1        started  172.31.102.95   i-051a93407700bc598  bionic  us-west-2a  running
2        started  172.31.102.73   i-0663d1baf47487040  bionic  us-west-2a  running
3        started  172.31.102.151  i-0e84af70f8115f57b  bionic  us-west-2a  running
4        started  172.31.102.181  i-04348b1b743d6048b  bionic  us-west-2a  running
5        started  172.31.102.4    i-02541a9b9bc1efb8a  bionic  us-west-2a  running
6        started  172.31.102.144  i-0998891271a632f3e  bionic  us-west-2a  running
7        started  172.31.102.246  i-09ed2ca17685a2393  bionic  us-west-2a  running
8        started  172.31.102.192  i-0a74512e86695fbdc  bionic  us-west-2a  running
9        started  172.31.102.30   i-0f488da3b1dfe618a  bionic  us-west-2a  running
11       started  172.31.102.27   i-0ccfa6f129e704b7f  bionic  us-west-2a  running
```

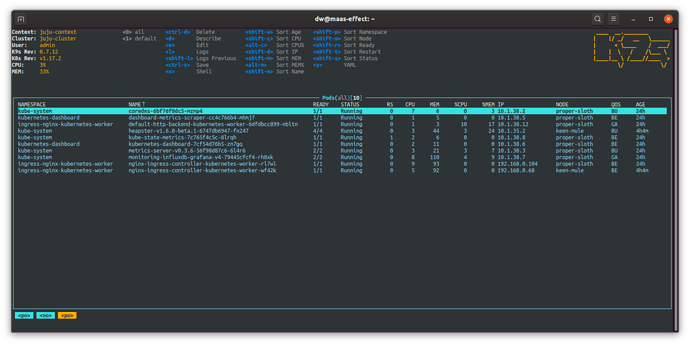

Now that we have K8s, it's time to create the Juju k8s model, provision storage, and deploy the k8s workload.

```shell
# Create the K8S cloud and K8S model
juju scp kubernetes-master/0:config ~/.kube/config
kubectl config view --raw | juju add-k8s myk8scloud --cluster-name=juju-cluster
juju add-model bdxk8smodel myk8scloud

# Create the storage pools (operator and application)
juju create-storage-pool operator-storage kubernetes \
    storage-class=juju-operator-storage \
    storage-provisioner=kubernetes.io/aws-ebs parameters.type=gp2
juju create-storage-pool k8s-ebs kubernetes \
    storage-class=juju-ebs \
    storage-provisioner=kubernetes.io/aws-ebs parameters.type=gp2
```
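Before deploying, a quick sanity check of the kubeconfig, the model's namespace and the new pools can catch mistakes early. A sketch using standard `kubectl` and `juju` commands with the names from above; the function name `check_k8s_model` is hypothetical.

```shell
#!/usr/bin/env bash
# Usage: check_k8s_model [model]   (defaults to the model created above)
check_k8s_model() {
  model="${1:-bdxk8smodel}"
  # kubectl can reach the cluster with the copied config
  kubectl get nodes
  # Juju created a namespace named after the k8s model
  kubectl get namespace "$model"
  # both storage pools (operator-storage, k8s-ebs) are registered
  juju storage-pools --model "$model"
}
```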

At this point, Juju is ready to deploy the jenkins-k8s charm:

```shell
juju deploy cs:~jamesbeedy/jenkins-k8s-0 --storage jenkins-home=1G,k8s-ebs
```

Wait for the deployment to settle. It cycles through a number of volume-creation error statuses for a few minutes, but it does eventually settle.
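The `juju status` output below reports the unit as active even though the pod turns out to be crash-looping, so it can help to wait on the pod itself. A sketch using `kubectl wait` (available in kubectl 1.11 and later); the pod name follows the `juju-jenkins-k8s-0` naming seen in the kubectl output below, and the 600-second default timeout is an arbitrary choice. With the pod stuck in CrashLoopBackOff this would time out and return non-zero, which is itself a useful signal; it may also fail immediately if the pod has not been created yet.

```shell
#!/usr/bin/env bash
# Usage: wait_for_jenkins [namespace] [timeout]
wait_for_jenkins() {
  ns="${1:-bdxk8smodel}"
  timeout="${2:-600s}"
  # Block until the workload pod's Ready condition is true, or time out.
  kubectl wait --for=condition=Ready pod/juju-jenkins-k8s-0 \
    --namespace "$ns" --timeout="$timeout"
}
```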

```
$ juju status
Model        Controller  Cloud/Region  Version  SLA          Timestamp
bdxk8smodel  pdl-aws     myk8scloud    2.5.0    unsupported  14:05:46-08:00

App          Version  Status  Scale  Charm        Store       Rev  OS          Address         Notes
jenkins-k8s           active      1  jenkins-k8s  jujucharms    0  kubernetes  10.152.183.104

Unit            Workload  Agent  Address    Ports     Message
jenkins-k8s/0*  active    idle   10.1.47.6  8080/TCP

$ juju storage
Unit           Storage id      Type        Pool     Size   Status    Message
jenkins-k8s/0  jenkins-home/0  filesystem  k8s-ebs  42MiB  attached  Successfully provisioned volume pvc-94699c34-1e8d-11e9-876a-0694b7418256 using kubernetes.io/aws-ebs
```

Here is what kubectl shows:

```
$ kubectl get namespaces
NAME                              STATUS   AGE
bdxk8smodel                       Active   32m
default                           Active   62m
ingress-nginx-kubernetes-worker   Active   62m
kube-public                       Active   62m
kube-system                       Active   62m

$ kubectl get all --namespace bdxk8smodel
NAME                              READY   STATUS             RESTARTS   AGE
pod/juju-jenkins-k8s-0            0/1     CrashLoopBackOff   10         31m
pod/juju-operator-jenkins-k8s-0   1/1     Running            0          32m

NAME                       TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)    AGE
service/juju-jenkins-k8s   ClusterIP   10.152.183.104   <none>        8080/TCP   31m

NAME                                         READY   AGE
statefulset.apps/juju-jenkins-k8s            0/1     31m
statefulset.apps/juju-operator-jenkins-k8s   1/1     32m
```

```
$ kubectl describe pods juju-jenkins-k8s-0 --namespace bdxk8smodel
Name:               juju-jenkins-k8s-0
Namespace:          bdxk8smodel
Node:               ip-172-31-102-30.us-west-2.compute.internal/172.31.102.30
Start Time:         Tue, 22 Jan 2019 13:35:19 -0800
Labels:             controller-revision-hash=juju-jenkins-k8s-688b95b7bc
                    juju-application=jenkins-k8s
                    juju-controller-uuid=3c1f900d-e056-49dc-8d13-42621a2ae60c
                    juju-model-uuid=f08a52e5-9645-4f0c-876c-1d01597dc3dc
                    statefulset.kubernetes.io/pod-name=juju-jenkins-k8s-0
Annotations:        <none>
Status:             Running
IP:                 10.1.47.6
Controlled By:      StatefulSet/juju-jenkins-k8s
Containers:
  jenkins-k8s:
    Container ID:   docker://bffdda745ae842a240e68563b7895966b0a63b37b12875c48d78f684ac10c06d
    Image:          registry.jujucharms.com/jamesbeedy/jenkins-k8s/jenkins_image@sha256:b7d5a693fd458c45178f4793dc5fe2695657f74111885106c36b9f3bd67e68f9
    Image ID:       docker-pullable://registry.jujucharms.com/jamesbeedy/jenkins-k8s/jenkins_image@sha256:b7d5a693fd458c45178f4793dc5fe2695657f74111885106c36b9f3bd67e68f9
    Port:           8080/TCP
    Host Port:      0/TCP
    State:          Waiting
      Reason:       CrashLoopBackOff
    Last State:     Terminated
      Reason:       Error
      Exit Code:    1
      Started:      Tue, 22 Jan 2019 17:27:55 -0800
      Finished:     Tue, 22 Jan 2019 17:27:55 -0800
    Ready:          False
    Restart Count:  50
    Environment:    <none>
    Mounts:
      /var/jenkins_home from juju-jenkins-home-0 (rw)
      /var/run/secrets/kubernetes.io/serviceaccount from default-token-sh6f2 (ro)
Conditions:
  Type              Status
  Initialized       True
  Ready             False
  ContainersReady   False
  PodScheduled      True
Volumes:
  juju-jenkins-home-0:
    Type:       PersistentVolumeClaim (a reference to a PersistentVolumeClaim in the same namespace)
    ClaimName:  juju-jenkins-home-0-juju-jenkins-k8s-0
    ReadOnly:   false
  default-token-sh6f2:
    Type:        Secret (a volume populated by a Secret)
    SecretName:  default-token-sh6f2
    Optional:    false
QoS Class:       BestEffort
Node-Selectors:  <none>
Tolerations:     node.kubernetes.io/not-ready:NoExecute for 300s
                 node.kubernetes.io/unreachable:NoExecute for 300s
Events:
  Type     Reason   Age                       From                                                  Message
  ----     ------   ----                      ----                                                  -------
  Warning  BackOff  2m12s (x1082 over 3h56m)  kubelet, ip-172-31-102-30.us-west-2.compute.internal  Back-off restarting failed container
```

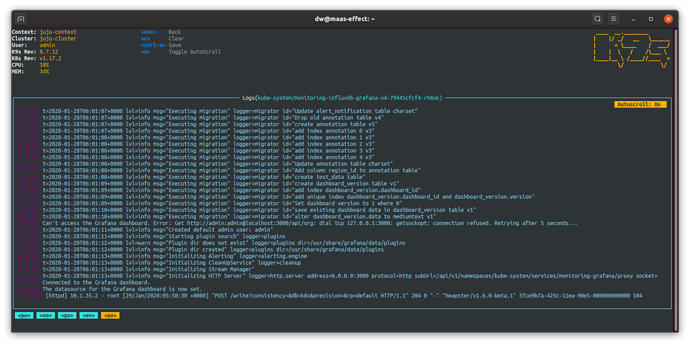

After all is said and done, I'm left wondering how to better introspect the CrashLoopBackOff, and how to configure ingress networking for my application.

For the CrashLoopBackOff, I think I'm best off researching how to debug Kubernetes applications.
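A starting point for that research, using only standard `kubectl` commands: the output of the *previous* (crashed) container run, the namespace's events, and the state of the volume claim. The pod and namespace names are the ones from this post; the function name `debug_crashloop` is hypothetical. One hypothesis worth checking in those logs, not confirmed by anything above: the image runs as the unprivileged `jenkins` user (see `USER jenkins` in the Dockerfile), so a freshly provisioned volume at `/var/jenkins_home` that this user cannot write to could make it exit at startup.

```shell
#!/usr/bin/env bash
# Usage: debug_crashloop <pod> <namespace>
debug_crashloop() {
  pod="$1"
  ns="$2"
  # Logs of the last terminated container; the describe output above shows
  # it exited with code 1, so the reason is most likely printed here.
  kubectl logs "$pod" --namespace "$ns" --previous
  # Recent events (scheduling, volume attach, back-off), oldest first.
  kubectl get events --namespace "$ns" --sort-by=.metadata.creationTimestamp
  # The claim backing /var/jenkins_home should show STATUS Bound.
  kubectl get pvc --namespace "$ns"
}
```

For example: `debug_crashloop juju-jenkins-k8s-0 bdxk8smodel`.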

For ingress, is there a doc or spec somewhere that describes how it is done?
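Two options, sketched under assumptions. For a quick look without any ingress, `kubectl port-forward` tunnels the ClusterIP service to localhost. For real ingress, the canonical-kubernetes bundle already runs an NGINX ingress controller on the workers (hence the `ingress-nginx-kubernetes-worker` namespace and the 80/tcp,443/tcp ports on the workers above), so a plain Kubernetes Ingress resource pointing at `juju-jenkins-k8s:8080` should be picked up by it. The hostname `jenkins.example.com` and the resource name `jenkins-k8s-ingress` are placeholders, `extensions/v1beta1` is assumed because the cluster runs Kubernetes 1.13, and the resource is created with kubectl directly, outside of Juju's control.

```shell
#!/usr/bin/env bash
forward_jenkins() {
  # Local-only access at http://localhost:8080 while this command runs.
  kubectl port-forward --namespace bdxk8smodel service/juju-jenkins-k8s 8080:8080
}

# Usage: apply_jenkins_ingress [hostname]
apply_jenkins_ingress() {
  host="${1:-jenkins.example.com}"   # placeholder hostname
  # Route HTTP for $host through the workers' NGINX ingress to the service.
  kubectl apply --namespace bdxk8smodel -f - <<EOF
apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: jenkins-k8s-ingress
spec:
  rules:
    - host: ${host}
      http:
        paths:
          - path: /
            backend:
              serviceName: juju-jenkins-k8s
              servicePort: 8080
EOF
}
```

The hostname then needs to resolve to a worker's public address. Neither option will serve anything until the pod stops crash-looping, since the service has no ready endpoint to route to.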

Thanks!