Introduction

This mini-tutorial will guide you through the steps needed to define a private subnet on AWS, associate it with a juju space and, finally, deploy a charm on a machine that is assigned an IP address from the space you just created.

Before we begin, you first need to bootstrap a controller on AWS. You can do this by running:

juju bootstrap aws test --credential $your_aws_credential_name

Creating a new private subnet on your AWS VPC

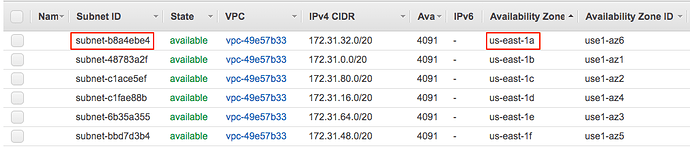

To create the new subnet we need to do some preparation work using the AWS console. Let’s start by pointing your browser at the list of subnets on the VPC used by the bootstrapped controller.

Select any one of the public subnets that are listed there and make a note of the subnet’s ID and availability zone. For this example, I chose subnet b8a4ebe4 in us-east-1a.

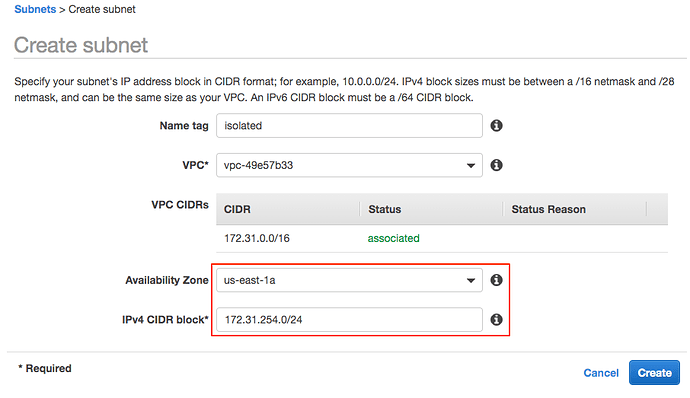

Next, click the Create Subnet button. In the following dialog, enter a name for your subnet (I chose isolated), select your juju VPC from the list and the availability zone that matches the public subnet you selected in the previous step. Then, enter the CIDR for your private subnet. For this example, I chose 172.31.254.0/24.
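If you prefer the command line over the console, the same step can be sketched with the AWS CLI. This is only a sketch: it assumes the aws CLI is installed and configured for the account used by juju, the helper name create_private_subnet is hypothetical, and the VPC ID in the usage comment is a placeholder you would replace with your own.

```shell
# Hypothetical helper: create the private subnet and print its ID.
create_private_subnet() {
    local vpc_id="$1"   # ID of the VPC used by the juju controller
    local az="$2"       # must match the AZ of the chosen public subnet
    local cidr="$3"     # CIDR block for the new private subnet

    aws ec2 create-subnet \
        --vpc-id "$vpc_id" \
        --availability-zone "$az" \
        --cidr-block "$cidr" \
        --tag-specifications "ResourceType=subnet,Tags=[{Key=Name,Value=isolated}]" \
        --query 'Subnet.SubnetId' --output text
}

# Usage (placeholder VPC ID):
# create_private_subnet vpc-0123456789abcdef0 us-east-1a 172.31.254.0/24
```

The helper only defines a function, so nothing is created until you call it with your own VPC ID.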

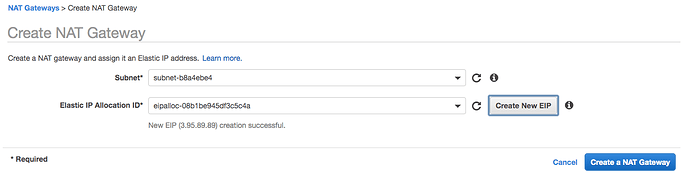

In the previous step, we created a private subnet and defined a CIDR for it. We can spin up machines in this subnet and they will receive an IP in the range we just defined. There is, however, one small caveat that we need to address: the subnet has no egress! When juju starts a machine in the subnet and attempts to execute any operation that requires Internet access (e.g. apt-get update), that operation will fail.

To rectify this issue, we need to add a NAT gateway to a public subnet in the same AZ as the private subnet and install a routing table for our private subnet that uses the NAT gateway for egress traffic. To set this up, navigate to the NAT Gateways section of the console and click the Create NAT Gateway button. Select the public subnet in us-east-1a for the NAT (b8a4ebe4 in this example) and assign an existing (or allocate a new) elastic IP to it.
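For reference, a rough AWS CLI equivalent of the NAT gateway step might look like the sketch below. It assumes you want a freshly allocated elastic IP; the helper name create_nat_gateway is hypothetical and the subnet ID in the usage comment is a placeholder.

```shell
# Hypothetical helper: create a NAT gateway in the given public subnet
# and print the gateway ID once it is available.
create_nat_gateway() {
    local public_subnet_id="$1"
    local allocation_id nat_id

    # Allocate a new elastic IP for the gateway.
    allocation_id=$(aws ec2 allocate-address --domain vpc \
        --query 'AllocationId' --output text) || return 1

    # Create the gateway in the public subnet using that elastic IP.
    nat_id=$(aws ec2 create-nat-gateway \
        --subnet-id "$public_subnet_id" \
        --allocation-id "$allocation_id" \
        --query 'NatGateway.NatGatewayId' --output text) || return 1

    # Block until the gateway has finished spinning up.
    aws ec2 wait nat-gateway-available --nat-gateway-ids "$nat_id" || return 1
    echo "$nat_id"
}

# Usage (placeholder subnet ID):
# create_nat_gateway subnet-0123456789abcdef0
```

Keep in mind that NAT gateways and elastic IPs are billed by AWS, so remember to delete them when you are done experimenting.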

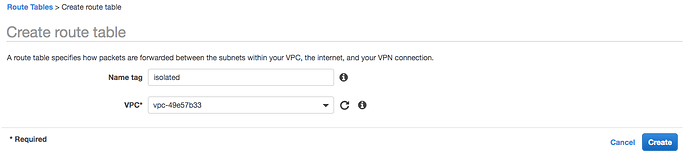

Once the NAT gateway spins up, visit the Route Tables section and click the Create route table button. In the dialog that pops up, specify a name for the routing table (I used isolated) and associate it with your VPC.

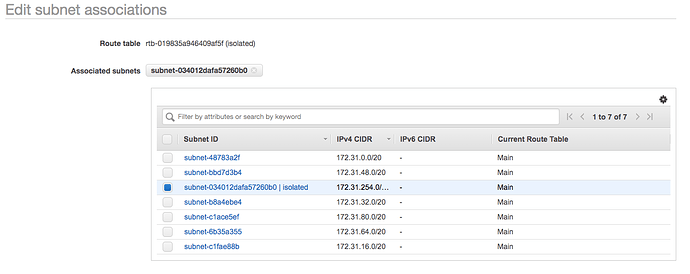

Next, we need to associate the new routing table with our private subnet. Select the isolated routing table and click the Subnet Associations tab at the bottom of your screen. Click the Edit subnet associations button and select the isolated subnet from the list.

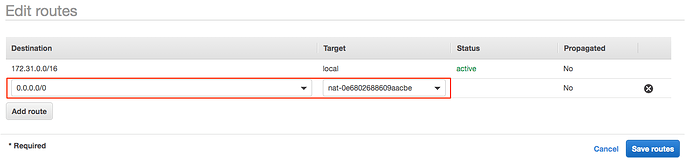

The last thing we need to do is to add a “catch-all” route entry to the new routing table for sending all egress traffic to the NAT we have just created. To do this, click on the Routes tab and then click the Edit routes button. Add a new entry with destination 0.0.0.0/0 and select the NAT gateway as its target.
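The three routing steps above (create the table, associate it with the private subnet, add the catch-all route) can also be sketched with the AWS CLI. As before, this is an illustration rather than the exact procedure used in this tutorial: the helper name route_private_subnet_via_nat is hypothetical and all IDs in the usage comment are placeholders.

```shell
# Hypothetical helper: route all egress traffic of a private subnet
# through a NAT gateway and print the ID of the new route table.
route_private_subnet_via_nat() {
    local vpc_id="$1" private_subnet_id="$2" nat_id="$3"
    local rtb_id

    # Create an empty route table in the VPC.
    rtb_id=$(aws ec2 create-route-table --vpc-id "$vpc_id" \
        --query 'RouteTable.RouteTableId' --output text) || return 1

    # Associate the route table with the private subnet.
    aws ec2 associate-route-table \
        --route-table-id "$rtb_id" \
        --subnet-id "$private_subnet_id" >/dev/null || return 1

    # Add the catch-all route that sends egress traffic to the NAT gateway.
    aws ec2 create-route --route-table-id "$rtb_id" \
        --destination-cidr-block 0.0.0.0/0 \
        --nat-gateway-id "$nat_id" >/dev/null || return 1

    echo "$rtb_id"
}

# Usage (placeholder IDs):
# route_private_subnet_via_nat vpc-0123456789abcdef0 subnet-0fedcba9876543210 nat-0123456789abcdef0
```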

Define a space and deploy something to it

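To make juju aware of the subnet we just created and to create a space for it, we can run the following commands. The first refreshes juju’s view of the subnets available in the cloud; the second creates a space named isolated that contains our new subnet: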

$ juju reload-spaces

$ juju add-space isolated 172.31.254.0/24
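Before deploying, it can be handy to confirm that the space is really there. The sketch below wraps juju spaces in a small check; the helper name space_exists is hypothetical, and it assumes that the default tabular output prints the space name in the first column.

```shell
# Hypothetical helper: succeed if `juju spaces` lists a space with the given name.
# Assumes the space name is the first whitespace-separated column of each row.
space_exists() {
    juju spaces | awk -v name="$1" '$1 == name { found = 1 } END { exit !found }'
}

# Usage:
# space_exists isolated && echo "space is ready"
```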

Finally, we can provide a space constraint when deploying a charm to ensure that its units are placed in the space we have just created:

$ juju deploy mysql --constraints spaces=isolated

Note that due to the way that juju deals with multi-AZ setups, it will take a bit of time before a new machine can be started in the correct AZ/subnet combination. If you are monitoring the juju status output, don’t be alarmed by the AZ-related errors that you might see. Just be patient; the machine will eventually spin up and the charm will be deployed.

Eventually, juju status will show the unit as active. As you can see in the status output below, the unit has been assigned an IP from the isolated subnet’s CIDR.

$ juju status

Model    Controller     Cloud/Region   Version  SLA          Timestamp
default  aws-us-east-1  aws/us-east-1  2.6.9    unsupported  11:13:58+01:00

App    Version  Status  Scale  Charm  Store       Rev  OS      Notes
mysql  5.7.27   active      1  mysql  jujucharms   58  ubuntu

Unit      Workload  Agent  Machine  Public address  Ports     Message
mysql/0*  active    idle   0        172.31.254.16   3306/tcp  Ready

Machine  State    DNS            Inst id              Series  AZ          Message
0        started  172.31.254.16  i-08920c50ec1f39cd4  xenial  us-east-1a  running
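If you would rather not eyeball the address, the membership check can be done with plain shell arithmetic. The sketch below is self-contained and does not call juju; the helper names ip_to_int and ip_in_cidr are made up for this example, and it handles IPv4 addresses only.

```shell
# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
    local a b c d
    IFS=. read -r a b c d <<EOF
$1
EOF
    echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# Succeed if the address ($1) lies inside the CIDR block ($2).
ip_in_cidr() {
    local ip="$1" net="${2%/*}" bits="${2#*/}"
    # Network mask with the top $bits bits set.
    local mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
    [ $(( $(ip_to_int "$ip") & mask )) -eq $(( $(ip_to_int "$net") & mask )) ]
}

# Usage:
# ip_in_cidr 172.31.254.16 172.31.254.0/24 && echo "unit is in the isolated subnet"
```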

What if I need to ssh into a machine with a private IP?

Establishing an ssh connection into a machine that has been assigned a private subnet IP using the juju ssh command is not currently possible. While the new subnet does have an egress connection to the Internet, there isn’t a way for us to reach that subnet from outside the VPC.

The security groups in place already allow the controller to talk to machines in the private subnet. In addition, we can ssh into the controller machine since it has been assigned a public IP. The solution for accessing machines in private subnets is therefore to use the controller machine as a jump-box.

We will first need to ssh into the controller and from there ssh to the actual box using our local juju client’s ssh credentials. This can be achieved with a single ssh command invocation if we specify the appropriate ProxyCommand option.

The proposed approach requires us to figure out the IP addresses of both the controller and the actual machine we need to access. To save you some time I have created the following bash function which you can add to your .bashrc (or .zshrc):

function juju-proxy-ssh {
    if [[ $# -ne 1 ]]; then
        echo "Usage: juju-proxy-ssh machine_number" >&2
        return 1
    fi

    # Address of the first API endpoint listed for the current controller (port stripped).
    local controller_ip=$(juju show-controller | grep api-endpoints | cut -d\' -f2 | cut -d: -f1)
    # Address (DNS column) of the requested machine in the current model.
    local machine_ip=$(juju show-machine "$1" --format tabular | grep started | awk '{print $3}')

    echo "Using controller box at $controller_ip as a jump-box for accessing machine $1 at $machine_ip"

    # Connect to the machine, tunnelling through the controller via ProxyCommand.
    ssh -i ~/.local/share/juju/ssh/juju_id_rsa \
        -o StrictHostKeyChecking=no -o UserKnownHostsFile=/dev/null \
        -o ProxyCommand="ssh -i ~/.local/share/juju/ssh/juju_id_rsa -o StrictHostKeyChecking=no -o UserKnownHostsFile=/dev/null ubuntu@$controller_ip nc %h %p" \
        "ubuntu@$machine_ip"
}

After sourcing the above function, all you need to do is to type juju-proxy-ssh and provide it with the machine ID (from the currently active model) that you wish to connect to:

$ juju-proxy-ssh 0
Using controller box at 3.90.139.110 as a jump-box for accessing machine 0 at 172.31.254.16
Warning: Permanently added '3.90.139.110' (ECDSA) to the list of known hosts.
Warning: Permanently added '172.31.254.16' (ECDSA) to the list of known hosts.
Welcome to Ubuntu 16.04.6 LTS (GNU/Linux 4.4.0-1092-aws x86_64)

* Documentation: https://help.ubuntu.com
* Management: https://landscape.canonical.com
* Support: https://ubuntu.com/advantage

0 packages can be updated.
0 updates are security updates.

New release '18.04.2 LTS' available.
Run 'do-release-upgrade' to upgrade to it.

*** System restart required ***
Last login: Mon Sep 30 08:36:33 2019 from 172.31.33.185

To run a command as administrator (user "root"), use "sudo <command>".
See "man sudo_root" for details.

ubuntu@ip-172-31-254-16:~$ logout
Connection to 172.31.254.16 closed.
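If the function ever picks the wrong jump-box address, it helps to try the controller lookup in isolation. The sketch below runs the same grep/cut pipeline against a sample api-endpoints line instead of live juju show-controller output. The sample line is illustrative only (the addresses and port are placeholders modelled on the session above), and the helper name first_api_endpoint_ip is hypothetical.

```shell
# Extract the address of the first API endpoint from
# `juju show-controller`-style output read on stdin.
first_api_endpoint_ip() {
    grep api-endpoints | cut -d"'" -f2 | cut -d: -f1
}

# Illustrative sample line; addresses and port are placeholders.
sample="    api-endpoints: ['3.90.139.110:17070', '172.31.33.185:17070']"

printf '%s\n' "$sample" | first_api_endpoint_ip   # prints 3.90.139.110
```

Note that the pipeline simply takes the first endpoint in the list. If your controller happens to list a private address first, you would need to adjust the field passed to cut.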