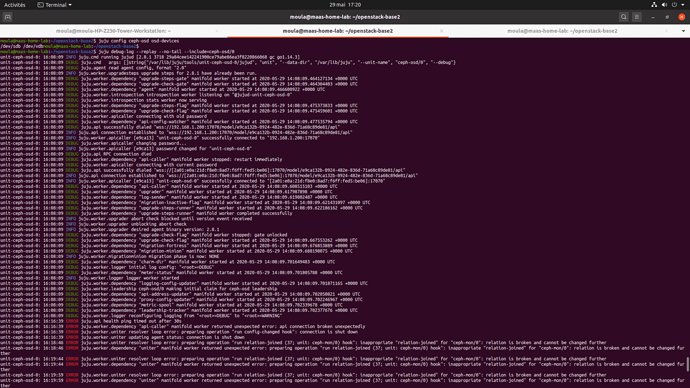

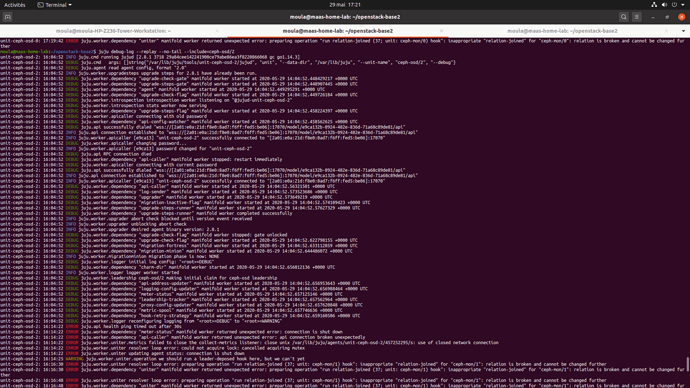

I am trying to deploy the latest openstack-base bundle. Everything works except the Ceph cluster: only one ceph-osd is created. I have tried several times, but the result is always the same. I also tried creating the OSDs manually with ceph-volume; that worked, but the resulting OSDs are not integrated with the ceph-mon cluster.

Thanks.

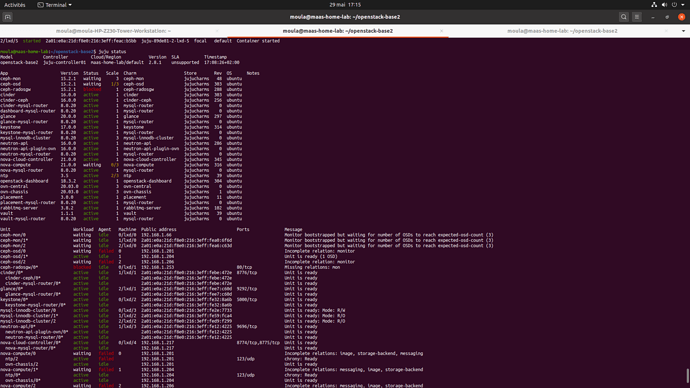

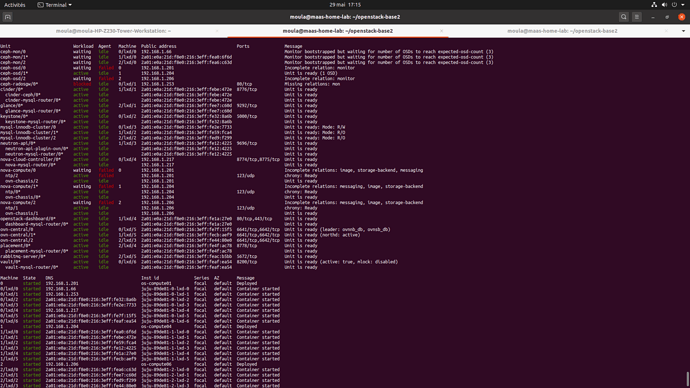

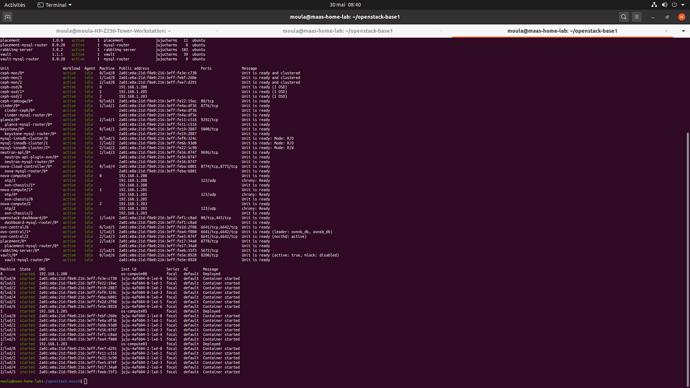

juju status:

| Unit | Workload | Agent | Machine | Public address | Ports | Message |
|---|---|---|---|---|---|---|
| ceph-mon/0 | waiting | idle | 0/lxd/0 | 2a01:e0a:21d:f8e0:216:3eff:feeb:127 | | Monitor bootstrapped but waiting for number of OSDs to reach expected-osd-count (3) |
| ceph-mon/1* | waiting | idle | 1/lxd/0 | 2a01:e0a:21d:f8e0:216:3eff:fe1a:83f1 | | Monitor bootstrapped but waiting for number of OSDs to reach expected-osd-count (3) |
| ceph-mon/2 | waiting | idle | 2/lxd/0 | 2a01:e0a:21d:f8e0:216:3eff:fe44:6d6 | | Monitor bootstrapped but waiting for number of OSDs to reach expected-osd-count (3) |
| ceph-osd/0 | waiting | failed | 0 | 192.168.1.208 | | Incomplete relation: monitor |
| ceph-osd/1* | active | idle | 1 | 192.168.1.205 | | Unit is ready (1 OSD) |
| ceph-osd/2 | waiting | failed | 2 | 192.168.1.203 | | Incomplete relation: monitor |
| ceph-radosgw/0* | blocked | idle | 0/lxd/1 | 2a01:e0a:21d:f8e0:216:3eff:fef1:c5b2 | 80/tcp | Missing relations: mon |
| cinder/0* | active | idle | 1/lxd/1 | 2a01:e0a:21d:f8e0:216:3eff:fe6b:32a | 8776/tcp | Unit is ready |
| cinder-ceph/0* | waiting | idle | | 2a01:e0a:21d:f8e0:216:3eff:fe6b:32a | | Incomplete relations: ceph |
| cinder-mysql-router/0* | active | idle | | 2a01:e0a:21d:f8e0:216:3eff:fe6b:32a | | Unit is ready |
| glance/0* | active | idle | 2/lxd/1 | 2a01:e0a:21d:f8e0:216:3eff:fe54:6dcb | 9292/tcp | Unit is ready |
| glance-mysql-router/0* | active | idle | | 2a01:e0a:21d:f8e0:216:3eff:fe54:6dcb | | Unit is ready |
| keystone/0* | active | idle | 0/lxd/2 | 2a01:e0a:21d:f8e0:216:3eff:fe63:2b72 | 5000/tcp | Unit is ready |
| keystone-mysql-router/0* | active | idle | | 2a01:e0a:21d:f8e0:216:3eff:fe63:2b72 | | Unit is ready |
| mysql-innodb-cluster/0 | active | idle | 0/lxd/3 | 2a01:e0a:21d:f8e0:216:3eff:fe7c:578d | | Unit is ready: Mode: R/O |
| mysql-innodb-cluster/1* | active | idle | 1/lxd/2 | 2a01:e0a:21d:f8e0:216:3eff:fe1a:6ce | | Unit is ready: Mode: R/W |
| mysql-innodb-cluster/2 | active | idle | 2/lxd/2 | 2a01:e0a:21d:f8e0:216:3eff:fe87:ff47 | | Unit is ready: Mode: R/O |
| neutron-api/0* | active | idle | 1/lxd/3 | 2a01:e0a:21d:f8e0:216:3eff:fef2:dd7d | 9696/tcp | Unit is ready |
| neutron-api-plugin-ovn/0* | active | idle | | 2a01:e0a:21d:f8e0:216:3eff:fef2:dd7d | | Unit is ready |
| neutron-mysql-router/0* | active | idle | | 2a01:e0a:21d:f8e0:216:3eff:fef2:dd7d | | Unit is ready |
| nova-cloud-controller/0* | active | idle | 0/lxd/4 | 2a01:e0a:21d:f8e0:216:3eff:fefd:5ebd | 8774/tcp,8775/tcp | Unit is ready |
| nova-mysql-router/0* | active | idle | | 2a01:e0a:21d:f8e0:216:3eff:fefd:5ebd | | Unit is ready |
| nova-compute/0 | waiting | idle | 0 | 192.168.1.208 | | Incomplete relations: storage-backend |
| ntp/2 | active | idle | | 192.168.1.208 | 123/udp | chrony: Ready |
| ovn-chassis/2 | active | idle | | 192.168.1.208 | | Unit is ready |
| nova-compute/1* | waiting | idle | 1 | 192.168.1.205 | | Incomplete relations: storage-backend |
| ntp/0* | active | idle | | 192.168.1.205 | 123/udp | chrony: Ready |
| ovn-chassis/0* | active | idle | | 192.168.1.205 | | Unit is ready |
| nova-compute/2 | waiting | idle | 2 | 192.168.1.203 | | Incomplete relations: storage-backend |
| ntp/1 | active | idle | | 192.168.1.203 | 123/udp | chrony: Ready |
| ovn-chassis/1 | active | idle | | 192.168.1.203 | | Unit is ready |
| openstack-dashboard/0* | active | idle | 1/lxd/4 | 2a01:e0a:21d:f8e0:216:3eff:fe18:a9b5 | 80/tcp,443/tcp | Unit is ready |
| dashboard-mysql-router/0* | active | idle | | 2a01:e0a:21d:f8e0:216:3eff:fe18:a9b5 | | Unit is ready |
| ovn-central/0 | active | idle | 0/lxd/5 | 192.168.1.224 | 6641/tcp,6642/tcp | Unit is ready |
| ovn-central/1* | active | idle | 1/lxd/5 | 2a01:e0a:21d:f8e0:216:3eff:fe9a:ae00 | 6641/tcp,6642/tcp | Unit is ready (leader: ovnnb_db, ovnsb_db northd: active) |
| ovn-central/2 | active | idle | 2/lxd/3 | 2a01:e0a:21d:f8e0:216:3eff:fee3:9a20 | 6641/tcp,6642/tcp | Unit is ready |
| placement/0* | active | idle | 2/lxd/4 | 2a01:e0a:21d:f8e0:216:3eff:fefe:d014 | 8778/tcp | Unit is ready |
| placement-mysql-router/0* | active | idle | | 2a01:e0a:21d:f8e0:216:3eff:fefe:d014 | | Unit is ready |
| rabbitmq-server/0* | active | idle | 2/lxd/5 | 2a01:e0a:21d:f8e0:216:3eff:fe0e:291b | 5672/tcp | Unit is ready |
| vault/0* | active | idle | 0/lxd/6 | 2a01:e0a:21d:f8e0:216:3eff:fe23:1c55 | 8200/tcp | Unit is ready (active: true, mlock: disabled) |
| vault-mysql-router/0* | active | idle | | 2a01:e0a:21d:f8e0:216:3eff:fe23:1c55 | | Unit is ready |