Has anyone been able to bootstrap a localhost (LXD) controller on Arch Linux?

I get the following error regardless of whether I’m using the lxd snap (which I tried first) or the community package of lxd (configured for unprivileged containers). Both versions of lxd can launch a focal container with no problems.

I’ve tried on both a bare-metal install and a VM install (VirtualBox); no dice with either.

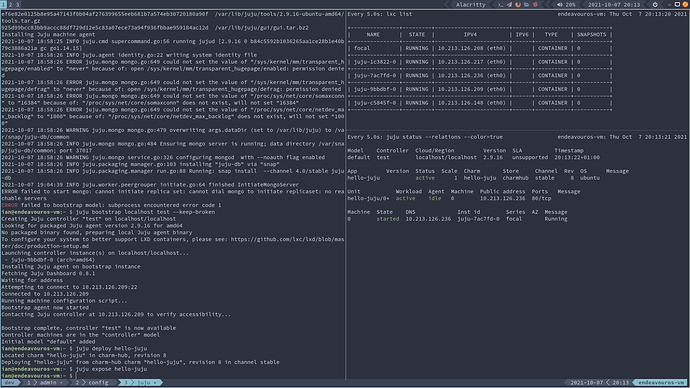

```text
Installing Juju machine agent
2021-10-04 19:41:18 INFO juju.cmd supercommand.go:56 running jujud [2.9.15 0 6a0461b47391cdb3464418f3eb58928d65a26773 gc go1.14.15]
2021-10-04 19:41:18 INFO juju.agent identity.go:22 writing system identity file
2021-10-04 19:41:18 ERROR juju.mongo mongo.go:649 could not set the value of "/proc/sys/net/core/somaxconn" to "16384" because of: "/proc/sys/net/core/somaxconn" does not exist, will not set "16384"
2021-10-04 19:41:18 ERROR juju.mongo mongo.go:649 could not set the value of "/proc/sys/net/core/netdev_max_backlog" to "1000" because of: "/proc/sys/net/core/netdev_max_backlog" does not exist, will not set "1000"
2021-10-04 19:41:18 ERROR juju.mongo mongo.go:649 could not set the value of "/sys/kernel/mm/transparent_hugepage/enabled" to "never" because of: open /sys/kernel/mm/transparent_hugepage/enabled: permission denied
2021-10-04 19:41:18 ERROR juju.mongo mongo.go:649 could not set the value of "/sys/kernel/mm/transparent_hugepage/defrag" to "never" because of: open /sys/kernel/mm/transparent_hugepage/defrag: permission denied
2021-10-04 19:41:18 WARNING juju.mongo mongo.go:479 overwriting args.dataDir (set to /var/lib/juju) to /var/snap/juju-db/common
2021-10-04 19:41:18 INFO juju.mongo mongo.go:484 Ensuring mongo server is running; data directory /var/snap/juju-db/common; port 37017
2021-10-04 19:41:18 WARNING juju.mongo service.go:326 configuring mongod with --noauth flag enabled
2021-10-04 19:41:18 INFO juju.packaging manager.go:103 installing "juju-db" via "snap"
2021-10-04 19:41:18 INFO juju.packaging.manager run.go:88 Running: snap install --channel 4.0/stable juju-db
ERROR failed to start mongo: juju-db snap not installed correctly. Executable /snap/bin/juju-db.mongod not found
ERROR failed to bootstrap model: subprocess encountered error code 1
```

The output above was produced with:

- stable juju snap v2.9.15
- stable lxd snap v4.18
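For anyone trying to narrow this down, here is a minimal diagnostic sketch in Python (my own helper, not part of Juju or LXD) that reports, for each path named in the log, whether it is missing or what access the current user appears to have. It assumes `python3` is available wherever you run it (for example inside a container launched the same way as the controller), and it only inspects the paths; it never writes to them. The function names `check_path` and `report` are hypothetical. Note that `os.access` checks permissions as the kernel reports them and may not catch every restriction that only appears when a file is actually opened, such as the `permission denied` on the transparent_hugepage files.

```python
import os

# Paths taken verbatim from the bootstrap log above.
PATHS = [
    "/proc/sys/net/core/somaxconn",
    "/proc/sys/net/core/netdev_max_backlog",
    "/sys/kernel/mm/transparent_hugepage/enabled",
    "/sys/kernel/mm/transparent_hugepage/defrag",
    "/snap/bin/juju-db.mongod",
]


def check_path(path):
    """Return "missing", or a comma-separated list of the access rights os.access reports."""
    if not os.path.exists(path):
        return "missing"
    flags = []
    if os.access(path, os.R_OK):
        flags.append("readable")
    if os.access(path, os.W_OK):
        flags.append("writable")
    if os.access(path, os.X_OK):
        flags.append("executable")
    # os.access only reflects permission checks; open() can still fail later.
    return ", ".join(flags) if flags else "no access"


def report(paths=PATHS):
    """Map each path to its status string."""
    return {path: check_path(path) for path in paths}


if __name__ == "__main__":
    for path, status in report().items():
        print(f"{path}: {status}")
```

Running it on the host and then inside a container makes it easy to compare which of the kernel tunables are hidden or read-only in the container.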