My Kubeflow deployment (following your quick guide on Ubuntu 20.04) is stuck on this problem: registry.jujucharms.com/kubeflow-charmers/kfp-viz/oci-image@sha256:c90a5818043da47448c4230953b265a66877bd143e4bdd991f762cf47e2a16d6 is not loading. Opening the URL directly in the browser returns a 404 error, and the address does not respond to ping.

@alfax1962 thanks for the message. I just ran through everything from scratch again and the only thing I needed that was outside the tutorial was to patch a role for the istio-ingressgateway charm using:

kubectl patch role -n kubeflow istio-ingressgateway-operator -p '{"apiVersion":"rbac.authorization.k8s.io/v1","kind":"Role","metadata":{"name":"istio-ingressgateway-operator"},"rules":[{"apiGroups":["*"],"resources":["*"],"verbs":["*"]}]}'
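For anyone who finds the inline JSON hard to quote by hand, here is a minimal sketch that builds the same patch payload in Python and prints a shell-safe command. The helper name build_patch_command is made up for illustration; the role name, namespace and rule are copied from the command above.

```python
import json
import shlex

# Permissive rule copied from the command above: all verbs on all resources.
patch = {
    "apiVersion": "rbac.authorization.k8s.io/v1",
    "kind": "Role",
    "metadata": {"name": "istio-ingressgateway-operator"},
    "rules": [{"apiGroups": ["*"], "resources": ["*"], "verbs": ["*"]}],
}


def build_patch_command(namespace: str = "kubeflow") -> str:
    """Return the kubectl patch command as one shell-quoted string."""
    args = [
        "kubectl", "patch", "role",
        "-n", namespace,
        "istio-ingressgateway-operator",
        "-p", json.dumps(patch, separators=(",", ":")),
    ]
    # shlex.join quotes the JSON so it survives copy/paste into a shell.
    return shlex.join(args)


print(build_patch_command())
```

The printed line can be pasted into a terminal as-is. Note that this rule grants the operator every verb on every resource in the namespace, so it is a workaround rather than a tight permission set.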

Did you use the --agent-version="2.9.22" as @dominik.f mentioned? Apart from that I’m not sure what else might be going wrong. If you could provide juju status and juju debug-log info for the failing charm there might be something helpful there. I’d also suggest doing a sudo snap remove microk8s --purge and trying again - perhaps something was left in microk8s that interacted with this?

Thank you very much @ca-scribner for your prompt reply. Everything is solved. The problem was my network: the site didn’t respond within the time Kubernetes allows. After some hours the pod initialized correctly. Many regards

You’re welcome! Glad to hear it

The controller can work with different models, which map to namespaces in Kubernetes. It is recommended to set up a specific model for Kubeflow:

this is not in fact the case. if you pick any name besides kubeflow for the model… the kubeflow desktop unit errors out… just heads up

Yeah sorry, I thought we had that model name issue covered in this guide, but it must have been an old one. At the moment there’s a hard-coded assumption in the upstream Kubeflow dashboard code that expects Kubeflow to be deployed in the k8s namespace kubeflow.

ah, no worries…

Any idea on how or where to access Spark in the full bundle? I posted a ticket on GitHub here:

https://github.com/canonical/bundle-kubeflow/issues/453

I am a total k8s newb so perhaps it’s in the full version but not called out explicitly in the application names or ?

We all start as newbs

I see @dominik.f replied on the issue, but I also subscribed to it so if his suggestion doesn’t work out reply and we can try to sort it out

thank you Andrew, I have actually hit a bug it seems… so I need to tear down the controller and start from scratch… once that’s done I will retry

The bug is described here: Bug #1968105 “Juju+microk8s: very weird behaviour”

edit: I’ve reconnected my client / controller and done the juju deploy spark-k8s

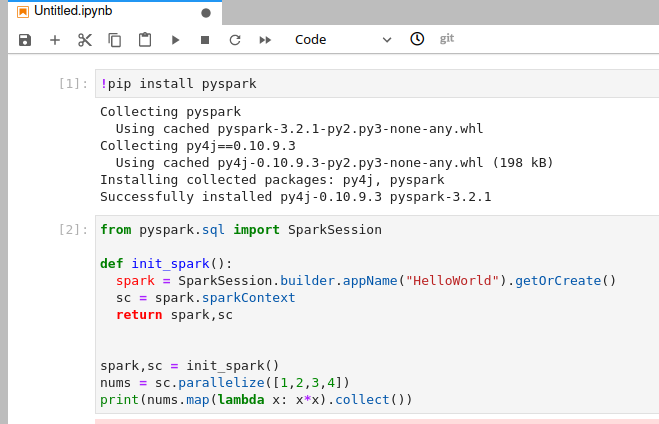

from there I am a bit lost… going to try to just … load a basic pyspark session… there’s not much documentation on the charmhub about this tho

Edit2: hmmm I seem to have notebooks just scheduling but never getting completed… my juju status shows an error on dex-auth/2

hook failed: "ingress-relation-broken"

I’m going to just tear down and start from scratch… and then at the end add juju deploy spark-k8s and retry

Edit3: well, after stopping and restarting the notebook it completed… I then tried a basic hello world NB with pyspark… I am assuming I need to set some… environment variables and point now to the spark-k8s application/unit in juju?

I’ve been trying to port-forward or create a tunnel to access this dashboard since I installed Kubeflow on my server. With the SOCKS tunnel I get an error about the hostname not having an IP associated with it, and I don’t know exactly how to deal with it.

Hey, try this tutorial for setting up remote access to Kubeflow - Set up remote access | Charmed Kubeflow - hopefully it will help get you going

Rob

Hey, is there a tutorial about how to use OpenEBS as the default storage class in Kubeflow?

I am looking forward to your reply.

I have been trying to install Kubeflow 1.6 on microk8s 1.22 for a few weeks without success. I followed this quick guide and now all containers are up and running, but I cannot access the web UI at http://10.64.140.43.nip.io.

Further investigation showed that there is no gateway configured.

microk8s kubectl get gateway -A

so I tried this fix

cat <<EOF | kubectl create -f -
apiVersion: networking.istio.io/v1beta1
kind: Gateway
metadata:
  labels:
    app.istio-pilot.io/is-workload-entity: "true"
    app.juju.is/created-by: istio-pilot
  name: kubeflow-gateway
  namespace: kubeflow
  resourceVersion: "2203"
spec:
  selector:
    istio: ingressgateway
  servers:
  - hosts:
    - '*'
    port:
      name: http
      number: 80
      protocol: HTTP
EOF

but I got this error

error: error validating "STDIN": error validating data: [ValidationError(Gateway.metadata): unknown field "selector" in io.k8s.apimachinery.pkg.apis.meta.v1.ObjectMeta, ValidationError(Gateway.metadata): unknown field "servers" in io.k8s.apimachinery.pkg.apis.meta.v1.ObjectMeta]; if you choose to ignore these errors, turn validation off with --validate=false
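A note on reading this error: it reports selector and servers as unknown fields of Gateway.metadata, which suggests kubectl parsed them as children of metadata rather than of spec. That usually points to indentation being lost when the manifest was pasted. One way to sidestep YAML indentation entirely is to build the manifest as a dictionary and emit JSON, which kubectl also accepts. The sketch below is only an illustration: the function name gateway_manifest is hypothetical, the field values are taken from the manifest in this post, and resourceVersion is left out on the assumption that it is assigned by the API server and should not be set when creating an object.

```python
import json


def gateway_manifest() -> dict:
    """Build the Gateway object with selector/servers nested under spec."""
    return {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "Gateway",
        "metadata": {
            "name": "kubeflow-gateway",
            "namespace": "kubeflow",
            "labels": {
                "app.istio-pilot.io/is-workload-entity": "true",
                "app.juju.is/created-by": "istio-pilot",
            },
        },
        "spec": {
            "selector": {"istio": "ingressgateway"},
            "servers": [
                {
                    "hosts": ["*"],
                    "port": {"name": "http", "number": 80, "protocol": "HTTP"},
                }
            ],
        },
    }


if __name__ == "__main__":
    # JSON has explicit braces, so nesting cannot be lost in a copy/paste.
    print(json.dumps(gateway_manifest(), indent=2))
```

If saved as, say, gateway.py (a made-up filename), the output could be piped with python3 gateway.py | kubectl create -f -.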

My installation:

Model     Controller          Cloud/Region        Version  SLA          Timestamp
kubeflow  microk8s-localhost  microk8s/localhost  2.9.35   unsupported  23:22:56+02:00

App                        Version                    Status  Scale  Charm                    Channel         Rev  Address         Exposed  Message
admission-webhook          res:oci-image@84a4d7d      active  1      admission-webhook        1.6/stable      50   10.152.183.210  no
argo-controller            res:oci-image@669ebd5      active  1      argo-controller          3.3/stable      99                   no
dex-auth                                              active  1      dex-auth                 2.31/stable     129  10.152.183.230  no
istio-ingressgateway                                  active  1      istio-gateway            1.11/stable     114  10.152.183.183  no
istio-pilot                                           active  1      istio-pilot              1.11/stable     131  10.152.183.252  no
jupyter-controller         res:oci-image@8f4ec33      active  1      jupyter-controller       1.6/stable      138                  no
jupyter-ui                 res:oci-image@cde6632      active  1      jupyter-ui               1.6/stable      99   10.152.183.198  no
kfp-api                    res:oci-image@1b44753      active  1      kfp-api                  2.0/stable      81   10.152.183.36   no
kfp-db                     mariadb/server:10.3        active  1      charmed-osm-mariadb-k8s  latest/stable   35   10.152.183.47   no       ready
kfp-persistence            res:oci-image@31f08ad      active  1      kfp-persistence          2.0/stable      76                   no
kfp-profile-controller     res:oci-image@d86ecff      active  1      kfp-profile-controller   2.0/stable      61   10.152.183.142  no
kfp-schedwf                res:oci-image@51ffc60      active  1      kfp-schedwf              2.0/stable      80                   no
kfp-ui                     res:oci-image@55148fd      active  1      kfp-ui                   2.0/stable      80   10.152.183.236  no
kfp-viewer                 res:oci-image@7190aa3      active  1      kfp-viewer               2.0/stable      79                   no
kfp-viz                    res:oci-image@67e8b09      active  1      kfp-viz                  2.0/stable      74   10.152.183.212  no
kubeflow-dashboard         res:oci-image@6fe6eec      active  1      kubeflow-dashboard       1.6/stable      154  10.152.183.245  no
kubeflow-profiles          res:profile-image@0a46ffc  active  1      kubeflow-profiles        1.6/stable      82   10.152.183.168  no
kubeflow-roles                                        active  1      kubeflow-roles           1.6/stable      31   10.152.183.193  no
kubeflow-volumes           res:oci-image@cc5177a      active  1      kubeflow-volumes         1.6/stable      64   10.152.183.141  no
metacontroller-operator                               active  1      metacontroller-operator  2.0/stable      48   10.152.183.178  no
minio                      res:oci-image@1755999      active  1      minio                    ckf-1.6/stable  99   10.152.183.2    no
oidc-gatekeeper            res:oci-image@32de216      active  1      oidc-gatekeeper          ckf-1.6/stable  76   10.152.183.79   no
seldon-controller-manager  res:oci-image@eb811b6      active  1      seldon-core              1.14/stable     92   10.152.183.253  no
training-operator                                     active  1      training-operator        1.5/stable      65   10.152.183.211  no

Unit                          Workload  Agent  Address      Ports              Message
admission-webhook/0*          active    idle   10.1.85.150  4443/TCP
argo-controller/0*            active    idle   10.1.85.186
dex-auth/0*                   active    idle   10.1.85.146
istio-ingressgateway/0*       active    idle   10.1.85.148
istio-pilot/0*                active    idle   10.1.85.149
jupyter-controller/0*         active    idle   10.1.85.175
jupyter-ui/0*                 active    idle   10.1.85.178  5000/TCP
kfp-api/0*                    active    idle   10.1.85.188  8888/TCP,8887/TCP
kfp-db/0*                     active    idle   10.1.85.160  3306/TCP           ready
kfp-persistence/0*            active    idle   10.1.85.187
kfp-profile-controller/0*     active    idle   10.1.85.184  80/TCP
kfp-schedwf/0*                active    idle   10.1.85.163
kfp-ui/0*                     active    idle   10.1.85.189  3000/TCP
kfp-viewer/0*                 active    idle   10.1.85.169
kfp-viz/0*                    active    idle   10.1.85.182  8888/TCP
kubeflow-dashboard/0*         active    idle   10.1.85.172  8082/TCP
kubeflow-profiles/0*          active    idle   10.1.85.167  8080/TCP,8081/TCP
kubeflow-roles/0*             active    idle   10.1.85.152
kubeflow-volumes/0*           active    idle   10.1.85.171  5000/TCP
metacontroller-operator/0*    active    idle   10.1.85.153
minio/0*                      active    idle   10.1.85.166  9000/TCP,9001/TCP
oidc-gatekeeper/0*            active    idle   10.1.85.185  8080/TCP
seldon-controller-manager/0*  active    idle   10.1.85.174  8080/TCP,4443/TCP
training-operator/0*          active    idle   10.1.85.155

It seems this fix is not working with microk8s Version 1.22.

Does anyone have an idea how to get kubeflow up and running?

Hello @ried Thank you for raising this. This command should force the charm to create the gateway by itself:

juju run --unit istio-pilot/0 -- "export JUJU_DISPATCH_PATH=hooks/config-changed; ./dispatch"

If it does not work the first time please try executing again. We will also update the quickstart guide with this new command.
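The "try again if it does not work" step can be scripted. Below is a rough sketch, not an official tool: it checks for the gateway with the microk8s kubectl command used earlier in this thread, re-runs the dispatch command if the gateway is missing, and gives up after a few attempts. The function names, the attempt count and the delay are arbitrary choices, and the gateway name kubeflow-gateway is assumed from the manifest posted above.

```python
import subprocess
import time
from typing import Callable, List

GATEWAY_CHECK = ["microk8s", "kubectl", "get", "gateway", "-A"]
DISPATCH = [
    "juju", "run", "--unit", "istio-pilot/0", "--",
    "export JUJU_DISPATCH_PATH=hooks/config-changed; ./dispatch",
]


def run(cmd: List[str]) -> str:
    """Run a command and return its stdout (empty string if it prints nothing)."""
    return subprocess.run(cmd, capture_output=True, text=True).stdout


def ensure_gateway(
    run_cmd: Callable[[List[str]], str] = run,
    attempts: int = 3,
    delay: float = 10.0,
) -> bool:
    """Re-run the config-changed hook until kubeflow-gateway shows up."""
    for _ in range(attempts):
        if "kubeflow-gateway" in run_cmd(GATEWAY_CHECK):
            return True
        run_cmd(DISPATCH)   # ask istio-pilot to reconcile again
        time.sleep(delay)   # give the charm a moment before re-checking
    return "kubeflow-gateway" in run_cmd(GATEWAY_CHECK)
```

Calling ensure_gateway() returns True once the gateway is listed and False if it is still missing after the last attempt. The run_cmd parameter exists only so the logic can be exercised without a cluster.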

I’m having the same issue with being unable to access the dashboard as others, copy/pasting the exact commands above on Ubuntu 20.04.

I have a gateway:

NAMESPACE  NAME              AGE
kubeflow   kubeflow-gateway  57m

If I use this I can access the dashboard via localhost:9092, but as others have noted the subpages don’t work:

kubectl -n kubeflow port-forward svc/kubeflow-dashboard 9092:8082

I see 10.64.140.43 assigned to the external IP:

istio-ingressgateway-workload LoadBalancer 10.152.183.198 10.64.140.43 80:30680/TCP,443:31675/TCP 23h

Snap info for microk8s shows v1.22.15.

When I navigate to 10.64.140.43 in my browser, it redirects to 10.64.140.43.nip.io with some request params in the URL, but my browser shows: “Hmm. We’re having trouble finding that site.”

Running busybox in the namespace, I can wget http://10.64.140.43.nip.io and appear to get a login page, so that’s presumably what I’m looking for? But I don’t see it when accessing from a local browser outside busybox. Note this is not remote access, this is the same machine as my microk8s cluster.

Juju status shows everything green and idle.

Given this is a vanilla Ubuntu distro and local access, and I copy/pasted the commands exactly, I’d expect this to just work? But I did need to blast away the kubernetes cluster and rebuild once in the process due to the disk filling, and juju not cleaning up very well.

Help!

Hi @mmattb,

Sorry you’re having troubles. I’m not sure what is going wrong, but at least have a few questions.

In your post you said "When I navigate to 10.64.140.43 in my browser, it redirects to 10.64.140.43.nip.io" - did you mean the reverse? I’d expect 10.64.140.43.nip.io to redirect to the gateway at 10.64.140.43 (making everything look as if you’d gone to a public website with that name). I think based on your message you did it correctly but just had it backwards in the post, but fingers crossed this sorts everything out.

If that doesn’t help, I wonder if something gets in the way of how nip.io functions. I’m not an expert on DNS and such things, but I believe modem, router, and ISP configuration among other things can block what nip.io is doing. Can you test nip.io with something else in your network, to confirm it is the problem? I see posts like this git issue talking about solutions - trying this one or searching for other generic nip.io fixes might help.
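A quick way to test nip.io from the affected machine is to resolve the hostname and compare the answer with the IP embedded in it. The sketch below is one possible check, with a hypothetical helper name check_nip_io; the resolver is passed in as a parameter so the logic can be tried without network access. Some routers and DNS resolvers filter answers that point at private address ranges such as 10.x.x.x, and that would show up here as a failed lookup, though this is only one possible cause.

```python
import socket
from typing import Callable


def check_nip_io(
    ip: str,
    resolve: Callable[[str], str] = socket.gethostbyname,
) -> str:
    """Resolve <ip>.nip.io and report whether it maps back to <ip>."""
    hostname = f"{ip}.nip.io"
    try:
        resolved = resolve(hostname)
    except OSError as exc:  # socket.gaierror is a subclass of OSError
        return f"{hostname} did not resolve: {exc}"
    if resolved == ip:
        return f"{hostname} resolves to {ip} as expected"
    return f"{hostname} resolved to {resolved}, expected {ip}"
```

Running print(check_nip_io("10.64.140.43")) on the host and again from inside a pod should make it clear whether the difference between the two is DNS.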

Andrew

Looks like the tutorial for setting up remote access has been moved to this URL:

Hi,

Did you find a solution to this issue? I’ve followed the steps for a local install and cannot access the dashboard at http://10.64.140.43.nip.io/. It just times out.

Any guidance would be much appreciated!

Ben

Hey @pashleb,

Sorry you’re having trouble. Have you looked into the juju status or kubectl get pod -n kubeflow? That might have some clues on where to troubleshoot. Feel free to post them here, or even better open an issue on our github issue board so we can dig into it further.
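If the status output is long, a small filter can help spot the units worth posting. This is a sketch under assumptions: it expects the JSON produced by juju status --format=json, and the key names used (applications, units, workload-status, juju-status, current) reflect my understanding of that layout, so please check them against your own output. The function name unhealthy_units is made up.

```python
import json
import sys
from typing import List, Tuple


def unhealthy_units(status: dict) -> List[Tuple[str, str, str]]:
    """Return (unit, workload, agent) for units that are not active/idle."""
    problems = []
    for app in status.get("applications", {}).values():
        for unit_name, unit in app.get("units", {}).items():
            workload = unit.get("workload-status", {}).get("current", "unknown")
            agent = unit.get("juju-status", {}).get("current", "unknown")
            if workload != "active" or agent != "idle":
                problems.append((unit_name, workload, agent))
    return problems


if __name__ == "__main__":
    # Intended use: juju status --format=json | python3 this_script.py
    for unit, workload, agent in unhealthy_units(json.load(sys.stdin)):
        print(f"{unit}: workload={workload} agent={agent}")
```

An empty result only means Juju considers every unit healthy; as this thread shows, the dashboard can still be unreachable in that case, so the gateway and DNS are the next things to check.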

I deployed Kubeflow 1.7 and the UI was accessible with all functions working properly. After upgrading to Kubeflow 1.8 the UI is not accessible anymore. All units are running with no errors in juju debug-log. Could you please advise on what to check?

ubuntu@node06:~$ microk8s kubectl get gateway -A

NAMESPACE        NAME                     AGE
kubeflow         istio-gateway            11h
knative-serving  knative-ingress-gateway  5h11m
knative-serving  knative-local-gateway    5h11m

Service details: istio-ingressgateway-workload LoadBalancer 10.152.183.20 172.27.80.162 80:30448/TCP,443:32371/TCP 11h